Spectral Enhancement

The audio and video recordings of the events we analyse are rarely of studio quality. They are often captured on low-quality recording devices, such as cellphones. Moreover, people recording such incidents often do so from a safe distance, which relegates the focal event to the background of the recording.

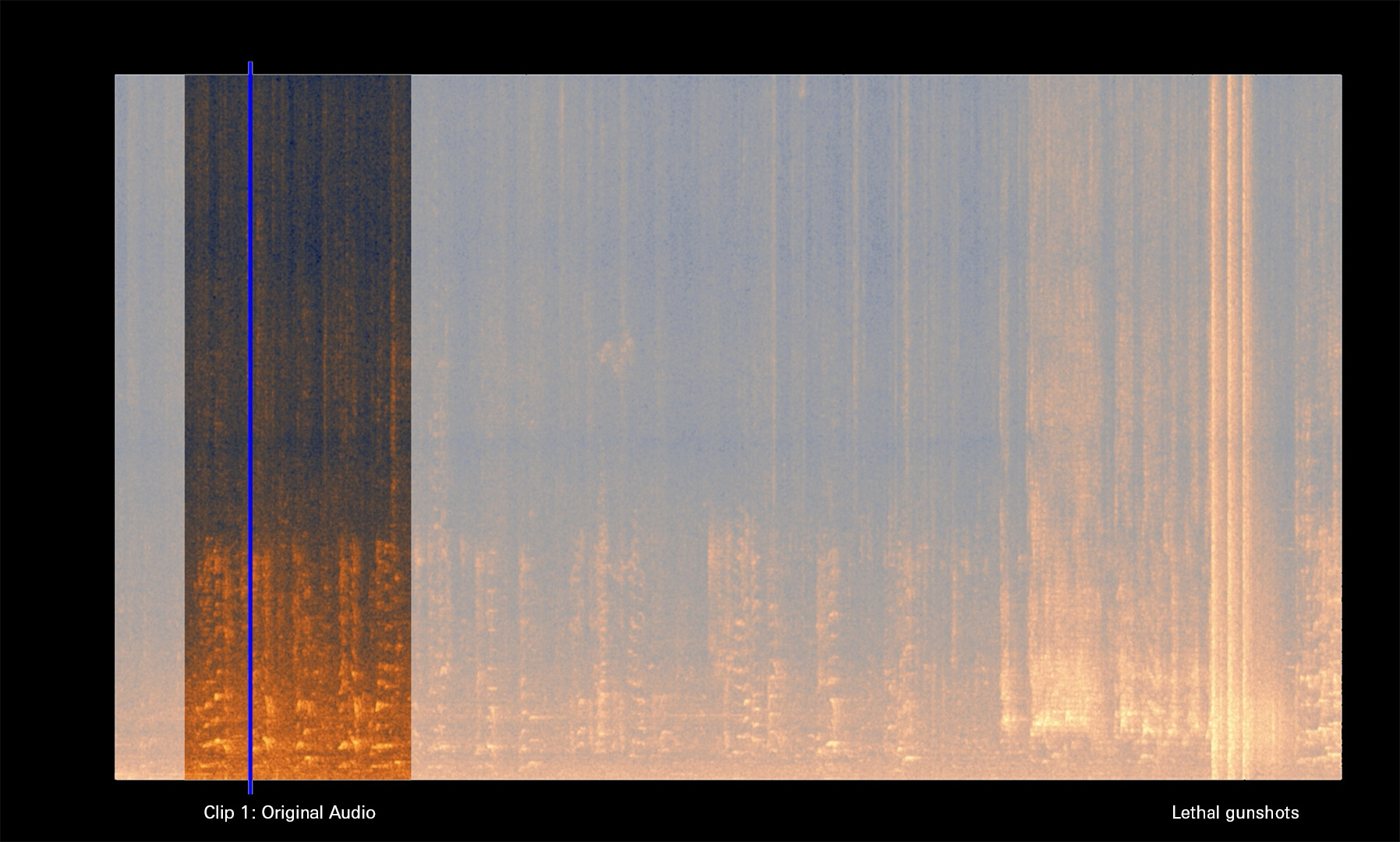

To address this challenge, we’ve developed “spectral enhancement” techniques that refocus the acoustic perspective, zeroing in on the vital audible traces. Our enhancement techniques combine manual and adaptive machine learning procedures. They isolate specific portions of the frequency spectrum, eliminate background noise, and amplify the audio signal, ultimately rendering the recording as clear and intelligible as possible.
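
As a rough illustration of the three operations named above (isolating a frequency band, discarding what lies outside it, and amplifying what remains), the sketch below applies a fixed band-pass mask in the frequency domain and then rescales the result. It is not our production pipeline, which also relies on manual and adaptive machine learning steps; the function name `enhance_band`, its parameters, and the default `peak` value are assumptions made for this example, and the naive transform is only practical for short clips.

```python
import cmath
import math


def dft(samples):
    """Naive discrete Fourier transform (O(n^2)); fine for short clips."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def idft(spectrum):
    """Inverse of dft(); returns the real part of each reconstructed sample."""
    n = len(spectrum)
    return [
        sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)).real / n
        for t in range(n)
    ]


def enhance_band(samples, sample_rate, low_hz, high_hz, peak=0.9):
    """Keep only low_hz..high_hz, then scale so the loudest sample equals `peak`.

    Hypothetical helper for illustration: a fixed band and a single gain,
    not an adaptive or learned enhancement.
    """
    n = len(samples)
    spectrum = dft(samples)
    for k in range(n):
        # Bin k and bin n-k hold the same frequency (positive and negative),
        # so both are kept or dropped together and the output stays real.
        freq = min(k, n - k) * sample_rate / n
        if not (low_hz <= freq <= high_hz):
            spectrum[k] = 0
    filtered = idft(spectrum)
    loudest = max(abs(v) for v in filtered)
    if loudest == 0:
        return filtered  # nothing in the band; avoid dividing by zero
    gain = peak / loudest
    return [v * gain for v in filtered]
```

For example, calling `enhance_band(samples, 44100, 300, 3400)` would keep roughly the band in which speech is most intelligible and discard low-frequency rumble and high-frequency hiss. The band limits here are illustrative; in practice they are chosen by inspecting each recording.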

Spectral enhancement for the killing of Sammy Baker